AI Emotion Detection in Videos

Full Breakdown + a Free AI Tool to Design the Visuals

AI emotion detection in videos has moved from research labs into mainstream marketing, UX research, education, content moderation, and customer support analytics. The products built around it all need the same family of visuals: hero dashboards with bounding boxes and confidence bars, character emotion sheets, sentiment timelines, marketing infographics, and feature grids. This page is a complete 2026 breakdown of AI emotion detection in videos covering accuracy, use cases, compliance, and the visual design language that makes these products legible and trustworthy. Below, generate every dashboard mockup, character emotion sheet, and marketing graphic you need for a launch, free and powered by Nano Banana Pro.

Design Visuals for AI Emotion Detection in Videos — Free

Whether you are building an AI emotion detection in videos product, writing a launch landing page, or pitching a sentiment analysis tool — every screen needs the right visuals. Generate dashboard mockups, character emotion sheets, sentiment timelines, and marketing graphics here in seconds.

AI Emotion Detection Visual Generator

Generate dashboard mockups, character emotion sheets, sentiment timelines, use case infographics, audience heatmaps, and feature grids — every visual an AI emotion detection in videos product needs to ship.

AI Emotion Detection in Videos — Visual Gallery

Six AI-generated visuals showing exactly the kind of dashboard mockups, character emotion sheets, and marketing graphics every AI emotion detection in videos product needs to ship. Generate your own variations above in seconds.

How AI Emotion Detection in Videos Actually Works

Three layers — perception, classification, and visualization — that turn a raw video file into the dashboards, timelines, and heatmaps users actually look at.

Perception Layer

AI emotion detection in videos starts with face detection and tracking: a model scans every frame, draws a bounding box around each visible face, and follows it across the timeline. Modern systems also extract between 68 and 478 facial landmarks per face, depending on the landmark model, so micro-expressions like a half-smile or a raised eyebrow become measurable. Optional inputs (voice tone from the audio track, head pose, body language, and gaze direction) feed in alongside the facial signal. This perception layer is invisible to the end user, but it is the foundation that makes every downstream visualization possible.

Classification Layer

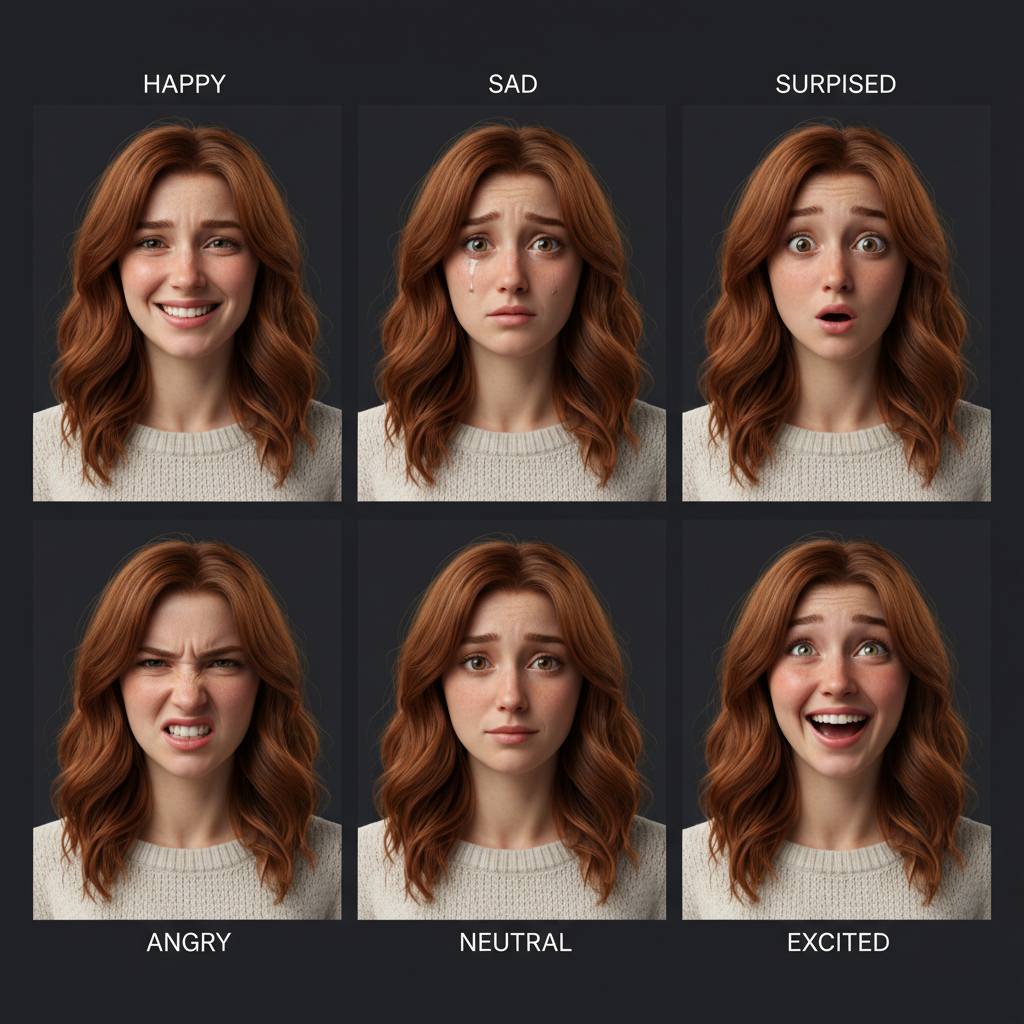

The classification layer maps perception data to emotion labels. AI emotion detection in videos models typically output seven primary emotions (happy, sad, surprised, angry, fearful, disgusted, neutral) with a confidence score per emotion per frame. Multi-modal AI emotion detection in videos blends facial classification with voice sentiment to gain 5–15 percentage points of accuracy over facial classification alone. The model output is a per-frame probability vector, not a single label, so downstream products can apply their own thresholds. For example, a marketing analytics product might flag a clip as engaging when the happy (joy) probability stays above 0.6 for more than 3 seconds.
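The thresholding example above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the function name `is_engaging`, the label order in `EMOTIONS`, and the assumption that the model emits one seven-value probability vector per frame are all hypothetical.

```python
from typing import Sequence

# Assumed label order for each per-frame probability vector (hypothetical).
EMOTIONS = ("happy", "sad", "surprised", "angry", "fearful", "disgusted", "neutral")


def is_engaging(
    frames: Sequence[Sequence[float]],
    fps: float,
    emotion: str = "happy",
    threshold: float = 0.6,
    min_seconds: float = 3.0,
) -> bool:
    """Return True if `emotion` stays above `threshold` for more than
    `min_seconds` of consecutive frames."""
    idx = EMOTIONS.index(emotion)
    run = 0  # length of the current consecutive run, in frames
    for probs in frames:
        if probs[idx] > threshold:
            run += 1
            if run / fps > min_seconds:
                return True
        else:
            run = 0  # the streak is broken, start counting again
    return False
```

Because the rule works on the raw probability vector, each product can pick its own emotion, threshold, and duration without retraining the model.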

Visualization Layer

The visualization layer is where AI emotion detection in videos becomes a product — confidence bars, sentiment timelines, heatmaps, character emotion sheets, and use case infographics. This is the layer the buyer actually sees on a landing page, in a pitch deck, or inside the product UI. The visual design language for AI emotion detection in videos has converged on a few standards: bounding boxes for per-face attribution, gradient bars for confidence scores, glowing timelines for sentiment changes, and character grids for marketing. The free AI image tool above generates every one of these visuals in seconds — perfect for AI emotion detection in videos product pages, decks, and onboarding flows.

Six Capabilities Every AI Emotion Detection in Videos Product Needs

The six concrete capabilities buyers expect from any modern AI emotion detection in videos product — and the visual design language that communicates each one clearly.

Facial Expression Analysis

AI emotion detection in videos starts with facial expression analysis — bounding boxes around each face, landmark tracking, and per-emotion confidence scores. The product needs to communicate this clearly: bounding boxes drawn on the live video preview, a confidence-bar column on the side, and a per-frame emotion strip along the bottom. Marketing visuals for AI emotion detection in videos almost always lead with this single hero shot.

Voice Sentiment Layer

Multi-modal AI emotion detection in videos adds voice analysis: tone, pitch, pace, and prosody mapped to emotional valence and arousal. Voice sentiment lifts accuracy by 5–15 percentage points and is essential for podcast analytics, customer support, and any audio-heavy use case. Visualize voice sentiment with waveform overlays, prosody curves, or a synced timeline running beneath the facial sentiment track.
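One simple way to combine the two signals is late fusion: a weighted average of the facial and voice probability vectors. The sketch below is an assumption-heavy illustration. It supposes the voice model has already been mapped onto the same emotion labels as the facial model, and the function name `fuse` and the default `face_weight` of 0.7 are hypothetical, not values from any specific product.

```python
from typing import List, Sequence


def fuse(
    face: Sequence[float],
    voice: Sequence[float],
    face_weight: float = 0.7,
) -> List[float]:
    """Weighted late fusion of two probability vectors over the same labels.

    Assumes both vectors use the same label order (hypothetical setup).
    The result is renormalized so it sums to 1.
    """
    if len(face) != len(voice):
        raise ValueError("face and voice vectors must have the same length")
    if not 0.0 <= face_weight <= 1.0:
        raise ValueError("face_weight must be between 0 and 1")
    mixed = [face_weight * f + (1.0 - face_weight) * v for f, v in zip(face, voice)]
    total = sum(mixed)
    if total == 0:
        return list(mixed)  # nothing to normalize
    return [m / total for m in mixed]
```

The weight is a tuning knob: audio-heavy content such as podcasts would plausibly lean more on the voice vector, while silent webcam footage would rely on the face alone.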

Audience Reaction Tracking

Audience-side AI emotion detection in videos — measuring how viewers react while watching, not how performers express on camera — is the highest-value use case for ad analytics and content marketing. Multi-face tracking across a webcam grid, aggregated emotion scores, and engagement timelines make audience reaction the showcase visual for any marketing-focused AI emotion detection in videos product page.

Real-Time Detection

Live AI emotion detection in videos — running at 24–60 fps on the device or in the browser — unlocks real-time use cases: live coaching, interview practice, customer support sentiment alerts, and live event audience meters. The visual design language for real-time AI emotion detection in videos uses pulsing bounding boxes, confidence bars that animate frame-by-frame, and a real-time sentiment ticker along the edge of the viewport.

Multi-Person Tracking

Group videos — meetings, panel discussions, classrooms, focus groups — require AI emotion detection in videos systems that track every face independently and attribute emotion changes to the right speaker. Multi-person tracking is non-trivial: faces overlap, partially occlude, and exit and re-enter the frame. The hero visual for multi-person AI emotion detection in videos shows four to eight bounding boxes with per-face emotion labels and unique color attribution.
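A common baseline for keeping each face attached to the same identity is greedy matching on bounding-box overlap (intersection over union, IoU). The sketch below is a minimal version of that idea, not a production tracker: the class name `FaceTracker`, the `min_iou` default, and the `(x1, y1, x2, y2)` box format are assumptions made for illustration.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class FaceTracker:
    """Greedy IoU tracker: each detection inherits the ID of the
    best-overlapping box from the previous frame, else gets a new ID."""

    def __init__(self, min_iou: float = 0.3) -> None:
        self.min_iou = min_iou
        self.tracks: Dict[int, Box] = {}  # track ID -> last seen box
        self._next_id = 0

    def update(self, detections: List[Box]) -> List[int]:
        """Return one track ID per detection, in input order."""
        pairs = sorted(
            ((iou(box, det), tid, i)
             for tid, box in self.tracks.items()
             for i, det in enumerate(detections)),
            reverse=True,
        )
        assigned: Dict[int, int] = {}  # detection index -> track ID
        used = set()
        for score, tid, i in pairs:
            if score < self.min_iou:
                break  # remaining pairs overlap too little to match
            if tid in used or i in assigned:
                continue
            assigned[i] = tid
            used.add(tid)
        for i in range(len(detections)):
            if i not in assigned:  # unmatched detection starts a new track
                assigned[i] = self._next_id
                self._next_id += 1
        self.tracks = {assigned[i]: det for i, det in enumerate(detections)}
        return [assigned[i] for i in range(len(detections))]
```

This sketch forgets a face as soon as it leaves the frame, so a person who exits and re-enters receives a new ID. Handling occlusion and re-entry, as described above, typically needs extra machinery such as keeping lost tracks alive for a few frames or matching on face embeddings.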

Sentiment Timeline & Heatmap

The sentiment timeline is the single most-requested AI emotion detection in videos visual on landing pages — a glowing horizontal track showing audience emotion intensity rising and falling across the video duration. Heatmaps extend this into a 2D view: time on the X axis, emotion category on the Y axis, intensity as color saturation. Both are core marketing visuals and product-UI elements that every AI emotion detection in videos team ships eventually.
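The heatmap layout described above (time on the X axis, emotion on the Y axis, intensity as color) reduces to a small grid of averaged probabilities. The following sketch assumes per-frame probability vectors as input. The function name `emotion_heatmap` and the one-second default bin are hypothetical choices.

```python
from typing import List, Sequence


def emotion_heatmap(
    frames: Sequence[Sequence[float]],
    fps: float,
    bin_seconds: float = 1.0,
) -> List[List[float]]:
    """Build a grid with one row per emotion and one column per time bin.

    Each cell holds the mean probability of that emotion over the frames
    in that bin, ready to be mapped to color saturation by a plotting layer.
    """
    if not frames:
        return []
    n_emotions = len(frames[0])
    frames_per_bin = max(1, round(fps * bin_seconds))
    n_bins = (len(frames) + frames_per_bin - 1) // frames_per_bin  # ceiling division
    grid = [[0.0] * n_bins for _ in range(n_emotions)]
    for b in range(n_bins):
        chunk = frames[b * frames_per_bin:(b + 1) * frames_per_bin]
        for e in range(n_emotions):
            grid[e][b] = sum(f[e] for f in chunk) / len(chunk)
    return grid
```

A single row of this grid is already a sentiment timeline for one emotion, so the same data can feed both visuals.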

Where AI Emotion Detection in Videos Ships in 2026

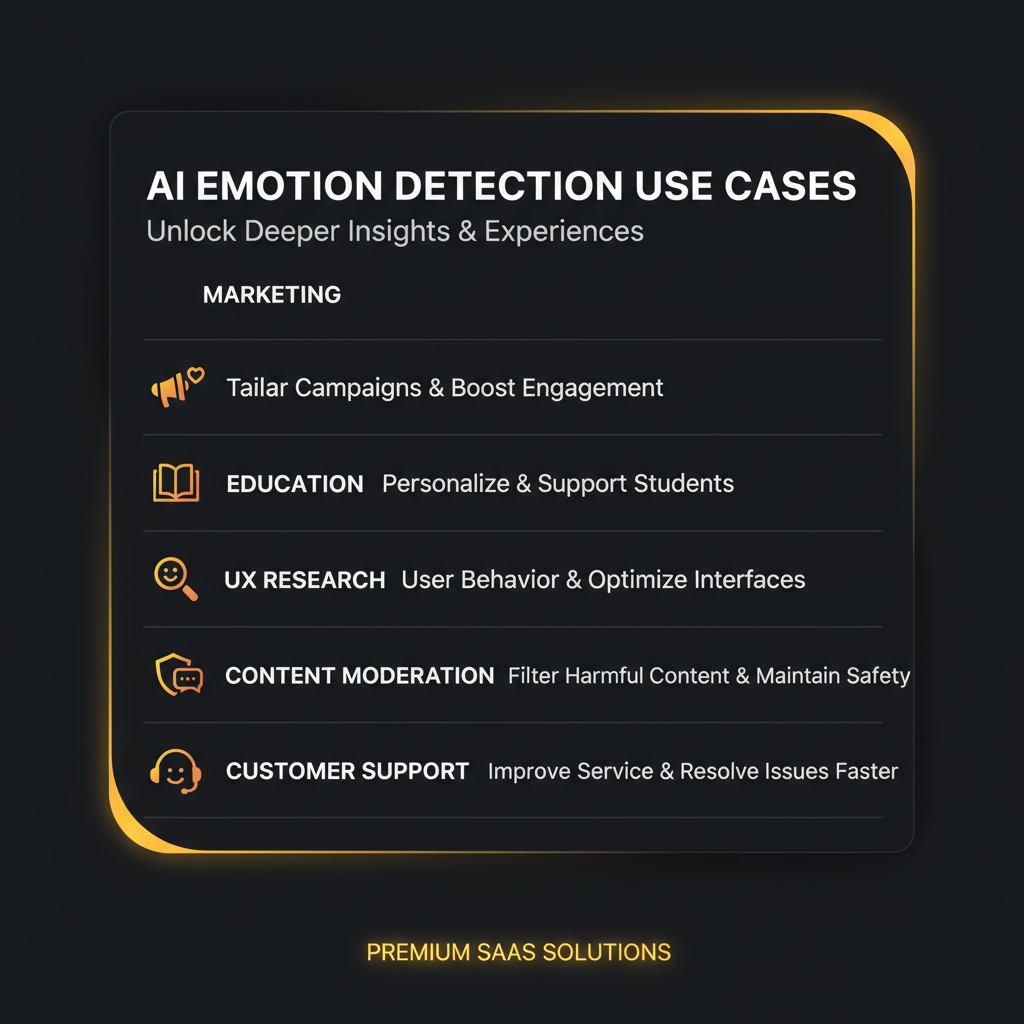

Six mainstream verticals already running AI emotion detection in videos in production, and the specific visual language each one uses on its product pages.

Marketing & Advertising

Brands and agencies use AI emotion detection in videos to measure real-time audience reactions to ad creative, predict ad performance before launch, and optimize creative iterations against an emotion benchmark. Visuals: hero dashboards with audience reaction heatmaps and per-second sentiment timelines.

UX & Product Research

UX teams record user-test videos and use AI emotion detection in videos to surface confused, frustrated, or delighted moments without manually scrubbing every recording. Visuals: timeline annotations, frustration spike alerts, and per-task emotion summaries layered over screen recordings.

Education & E-Learning

Educators apply AI emotion detection in videos to track student engagement during recorded lectures and live classes — flagging confusion, attention drops, and high-engagement moments for review. Visuals: per-student attention dashboards, classroom heatmaps, and lecture-quality scores.

Content Moderation

Trust and safety teams use AI emotion detection in videos to flag distress, fear, or aggression in user-uploaded video content. Visuals: priority queues with emotion-flagged thumbnails, severity scores, and reviewer workflow dashboards purpose-built for high-volume moderation work.
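A severity-ordered review queue of this kind can be sketched with a heap. Everything specific here is an assumption for illustration: the class name `ReviewQueue`, the choice of `fearful`, `angry`, and `sad` as proxies for fear, aggression, and distress, and the rule that severity is the highest of those peak probabilities.

```python
import heapq
from typing import Dict, List, Tuple

# Hypothetical mapping of the flagged signals to classifier labels.
FLAGGED = ("fearful", "angry", "sad")


def severity(peak_probs: Dict[str, float]) -> float:
    """Severity score: the highest peak probability among flagged emotions."""
    return max(peak_probs.get(label, 0.0) for label in FLAGGED)


class ReviewQueue:
    """Moderation queue that always hands out the most severe video next."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, str]] = []
        self._count = 0  # tie-breaker: earlier uploads first at equal severity

    def push(self, video_id: str, peak_probs: Dict[str, float]) -> None:
        # heapq is a min-heap, so store the negated severity.
        heapq.heappush(self._heap, (-severity(peak_probs), self._count, video_id))
        self._count += 1

    def pop(self) -> Tuple[str, float]:
        """Return (video_id, severity) for the highest-priority video."""
        neg_severity, _, video_id = heapq.heappop(self._heap)
        return video_id, -neg_severity

    def __len__(self) -> int:
        return len(self._heap)
```

The score only orders the queue. A human reviewer still makes the decision on each flagged video.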

Customer Support & Sales

Support and sales teams analyze video calls with AI emotion detection in videos to score caller sentiment, flag escalation risks, and benchmark agent performance. Visuals: post-call summaries with emotion arcs, agent-level coaching reports, and CRM-integrated emotion timelines for every recorded call.

Media & Sports Analytics

Broadcasters and sports analytics teams use AI emotion detection in videos to score TV broadcasts, study athlete focus and stress, and benchmark commentator engagement. Visuals: per-broadcast emotion timelines, athlete reaction heatmaps, and commentary-quality dashboards.

AI Emotion Detection in Videos FAQ

The seven questions every team building or buying AI emotion detection in videos asks before shipping.

Generate AI Emotion Detection Visuals Free

Whether you are launching an AI emotion detection in videos product, writing a landing page, or building a pitch deck — every dashboard mockup, character emotion sheet, sentiment timeline, and feature grid you need is one prompt away. No signup, no credit card, powered by Nano Banana Pro.

Start Generating Free

AI Emotion Detection in Videos: A Practical 2026 Guide for Content Analysts, Marketers, and Video Editors

What AI Emotion Detection in Videos Actually Means in 2026

AI emotion detection in videos is the application of computer vision and audio analysis to recognize human emotions in video content at the frame level — joy, sadness, surprise, anger, fear, disgust, and contextual signals like engagement or attention. The technology that powers AI emotion detection in videos has matured substantially over the past two years: face detection now runs at 60 fps on consumer GPUs, facial landmark tracking captures 478 distinct points per face, and multi-modal pipelines fuse facial, voice, and contextual cues into a single confidence vector. Accuracy on the seven primary emotions ranges from 80 to 95 percent under good lighting, and 65 to 80 percent in the wild — a level of reliability that has made AI emotion detection in videos production-ready for real customer-facing products in marketing, UX research, education, content moderation, and customer support analytics.

The visual design language for AI emotion detection in videos has converged around a small set of standard elements: bounding boxes drawn on the live video preview, gradient confidence bars for per-emotion scores, glowing sentiment timelines along the bottom of the dashboard, character emotion sheets used in marketing and onboarding, and use case infographics laying out the verticals the product serves. Every AI emotion detection in videos product page eventually needs all of these visuals — the hero dashboard mockup, the character grid, the timeline, the heatmap, the feature grid, and the use case infographic. The free AI image tool above is preset for exactly this visual family. Pick a category, type a short prompt, and the tool returns a polished AI emotion detection in videos visual ready to drop into a landing page, deck, or product UI.

Whether you searched ai emotion detection, video emotion analysis, ai video tools, emotion recognition, ai emotion tracking, sentiment analysis, video content, ai emotion detection in videos, facial expression analysis, video sentiment, audience reaction analytics, ai emotion dashboard, or emotion detection visuals — this page is built to give you the honest 2026 breakdown and a working AI image tool above to start producing the dashboards, character emotion sheets, and marketing graphics your AI emotion detection in videos product launch needs today.

Content Analysts, Marketers, and Video Editors Shipping AI Emotion Detection in Videos

Content analysts use AI emotion detection in videos to surface high-engagement moments inside hours of recorded video — replacing manual scrubbing with a sentiment timeline that points straight to the clips that landed. Marketers measure audience reactions to ad creative in real time, optimize creative iterations against emotional benchmarks, and benchmark campaign performance against historical emotion baselines. Video editors integrate AI emotion detection in videos directly into the timeline UI, jumping from one high-emotion moment to the next while cutting reels, trailers, and YouTube Shorts. UX researchers use AI emotion detection in videos to score user-test recordings automatically, flagging confusion or frustration without watching every second of every session. Educators use it to gauge student attention during recorded lectures and live classes, flagging the exact moments where engagement drops. Trust and safety teams apply AI emotion detection in videos to flag distress, fear, or aggression in user-uploaded content, accelerating moderation queues by surfacing the highest-priority videos first.

Customer support and sales teams analyze recorded video calls with AI emotion detection in videos to score caller sentiment, flag escalation risks, and benchmark agent performance against an emotion baseline. Sports analytics teams study athlete focus and stress across game footage, and broadcasters use AI emotion detection in videos to score TV broadcasts and benchmark commentator engagement. Every one of these AI emotion detection in videos use cases needs the same family of visual assets — hero dashboards, sentiment timelines, character emotion sheets, marketing infographics, audience reaction heatmaps — and every one of those assets is one prompt away in the free AI image tool above. Generate the dashboard mockup for your launch, the character grid for onboarding, the timeline for the pitch deck, and the feature grid for the pricing page in a single afternoon — no design team, no stock-asset license, no signup.

Looking for related AI image tools across the rest of your product launch? /tools/ai-image-generator is the general-purpose Nano Banana Pro image generator, /tools/ai-images-for-educational-content is built for explainer visuals and lecture imagery, and /tools/ai-image-for-social-media-ads handles paid-social creative for the AI emotion detection in videos campaigns you run after launch. Pair them with the AI emotion detection in videos visual tool on this page and the entire visual stack for your launch is unblocked in one afternoon.

AI emotion detection in videos has shipped into mainstream marketing, UX research, education, content moderation, and customer support analytics, and every product around it needs the same visual family. Generate dashboard mockups, character emotion sheets, sentiment timelines, and feature grids free above. Optional plans start at $2.99, only when you want unlimited generations.